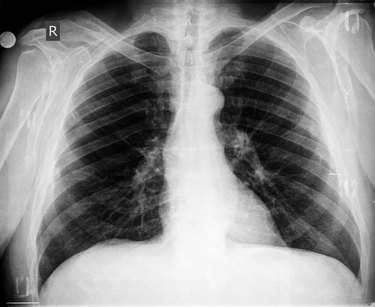

On our human body page, we provide detailed information on the structure and function of the body's major systems, including the circulatory, digestive, nervous and respiratory systems. We also explain how these systems interact and work together to maintain overall health.

Our team of medical experts provides in-depth articles on specific organs and their functions, as well as general information on maintaining a healthy body through nutrition, exercise and lifestyle choices. We also offer practical advice on managing common health concerns, such as stress, sleep disorders and chronic diseases.

Our human body page is a great resource for individuals of all ages and backgrounds. Whether you are a student learning about the human body for the first time or an adult looking to improve your health and wellness, our page has something for you.